Summary (Headline numbers)

- +30.2 points H-mean on the NomNaOCR test set: 0.731 → 0.952.

- Precision 0.966, Recall 0.937 after fine-tuning PP-OCRv5 (

PP-OCRv5_server_det).- 3,411 manuscript pages curated from NomNaOCR + the CWKB Korean Buddhist canon, scraped by a 16-worker Goroutine pipeline.

- 2× NVIDIA T4 distributed training on Kaggle, 100 epochs, peak validation H-mean at epoch 64.

Introduction

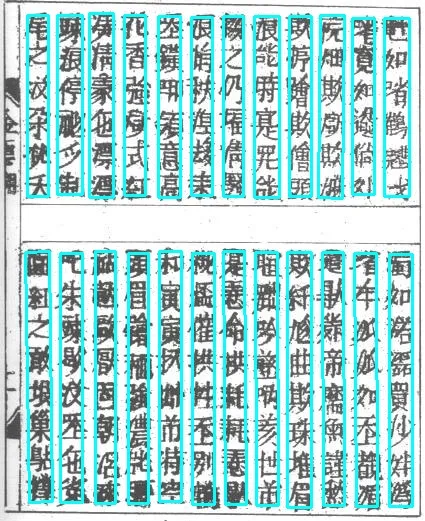

Sino-Nom (chữ Hán-Nôm) is the script that recorded over a thousand years of Vietnamese history, literature, and Buddhist commentary before the Latin-alphabet quốc ngữ took over. Today, almost no native readers remain, and the surviving manuscripts are degraded — bleed-through ink, wormholes, faded strokes, and slanted columns that defeat off-the-shelf OCR.

This project tackled the detection sub-problem (find every character bounding box) on two large-scale, real-world Sino-Nom corpora.

Note (Scope of this report)

We focus on the text detection stage of the OCR pipeline (input: page image, output: oriented bounding boxes around every character or character cluster). Recognition (image → unicode) is the next module and not covered here.

Datasets

We unified two heterogeneous corpora into a single PaddleOCR-compatible split.

NomNaOCR — Vietnamese Sino-Nom canon

NomNaOCR is the largest open Sino-Nom OCR dataset for Vietnam, built from three landmark works:

- Lục Vân Tiên (Nguyễn Đình Chiểu)

- Truyện Kiều (Nguyễn Du, 1866–1872 editions)

- Đại Việt Sử Ký Toàn Thư (the official imperial chronicle)

Total: 2,953 hand-written manuscript pages sourced from the Vietnamese Nôm Preservation Foundation.

CWKB — Complete Works of Korean Buddhism

The CWKB archive covers Korean Buddhist literature from the Silla through Joseon dynasties. The site exposes pages through paginated viewers, not bulk downloads, so we built a custom scraper.

4 collapsed lines

package main

import ( "context" "sync")

const workerCount = 16

func crawl(ctx context.Context, urls <-chan string, out chan<- Page) { var wg sync.WaitGroup for i := 0; i < workerCount; i++ { wg.Add(1) go func() { defer wg.Done() for u := range urls { page, err := fetchAndParse(ctx, u) if err != nil { continue } out <- page } }() } wg.Wait() close(out)}The Goroutine pool gave us ~4× wall-clock speedup over a sequential Python requests baseline at the same TCP connection budget — I/O-bound scraping is exactly where Go’s lightweight concurrency shines.

Final split

After standardizing filenames to <book>_<page>.jpg and writing PaddleOCR-style det_gt.txt labels, we kept NomNaOCR’s original validation set as the held-out test set, then mixed the rest with all CWKB images and re-split 80/20.

| Split | Images | Source |

|---|---|---|

| Train | 2,253 | NomNaOCR (train) ∪ CWKB, mixed and shuffled |

| Validation | 564 | 20% holdout of the same pool |

| Test | 594 | NomNaOCR original validation set, untouched |

Keeping the test split untouched ensures all reported metrics are comparable to other NomNaOCR baselines.

Architecture

We fine-tuned PP-OCRv5_server_det — the higher-capacity variant of PaddleOCR 3.0’s text-detection family — built around the Differentiable Binarization (DB) algorithm.

Components

- Backbone:

PP-HGNetV2_B4, knowledge-distilled fromGOT-OCR2.0. - Neck:

LKPAN(Large Kernel Path Aggregation Network), 256 output channels, fuses multi-scale features. - Head:

PFHeadLocal(Parallel Fusion Head) with for the differentiable binarization step.

DBLoss formulation

Detection is supervised by a weighted combination of a probability map loss and a threshold map loss:

with and as the original PP-OCRv5 recipe.

Solution (Why OHEM 3:1 matters here)

Sino-Nom pages have a high background-to-character pixel ratio. We kept Online Hard Example Mining at the default 3:1 negative-to-positive sampling, which forces the model to spend its gradient budget on the difficult faded strokes instead of trivial whitespace. Removing OHEM in an ablation knocked H-mean down by ~3 points.

Hyperparameter Setup

The default PP-OCRv5 recipe transferred surprisingly well — only epochs needed lowering. Full configuration:

| Group | Setting |

|---|---|

| Optimizer | Adam (, ); LR 0.001, Cosine Annealing + 2 warm-up epochs |

| Regularizer | L2, factor |

| Loss | DBLoss ( Dice + BCE), OHEM ratio 3:1 |

| Augmentation | Random crop to 640 × 640, rotation [-10°, +10°], scale [0.5, 3.0], horizontal flip |

| Post-proc | Binary threshold , box filter 0.6, region expand ratio 1.5 |

| Epochs | 100 (down from default 500) — enough thanks to a strong pretrained init |

| Init | PP-OCRv5_server_det_pretrained.pdparams, full fine-tune (Backbone + Neck + Head all updated) |

Optimizer: name: Adam beta1: 0.9 beta2: 0.999 lr: name: Cosine learning_rate: 0.001 warmup_epoch: 2 regularizer: name: L2 factor: 1.0e-06Loss: name: DBLoss balance_loss: true main_loss_type: DiceLoss alpha: 5 beta: 10 ohem_ratio: 3PostProcess: name: DBPostProcess thresh: 0.3 box_thresh: 0.6 unclip_ratio: 1.5Training

Training ran on Kaggle’s free tier:

- GPU: 2× NVIDIA Tesla T4 (15,360 MiB each), CUDA 12.8, cuDNN 9.2

- Framework: PaddlePaddle GPU + PaddleOCR

- Mode: Full fine-tuning, distributed data-parallel across both GPUs

- Checkpointing: best-on-validation H-mean auto-saved + every 10-epoch snapshot

- Eval cadence: every 500 steps on the validation split

Convergence behavior

The model converged fast and stably:

- Epoch 1: training loss dropped from 4.12 → 1.38 even while the LR was still warming up (from to ).

- Epoch ~20: validation H-mean already inside 1 point of the eventual best.

- Epoch 64: peak validation H-mean — the final exported checkpoint.

That a 500-epoch recipe peaks at 64 epochs on this domain is the strongest signal that fine-tuning, not training-from-scratch, is the right move.

Results

Headline metrics on the held-out NomNaOCR test set

| Metric | Baseline (PP-OCRv5_server_det) | Fine-tuned (ours) | |

|---|---|---|---|

| Precision | 0.713 | 0.966 | +0.253 |

| Recall | 0.750 | 0.937 | +0.187 |

| H-mean | 0.731 | 0.952 | +0.221 |

A baseline trained on Chinese/English/Japanese data already gets you to 0.731 H-mean — Sino-Nom characters share many radicals with Han Chinese, so the prior is non-trivial. The remaining 0.22 gap is the slope you only climb with domain-specific data.

Per-source breakdown

The test set is heterogeneous; performance is not uniform across the three NomNaOCR sub-corpora:

| Sub-corpus | Page condition | Detection quality |

|---|---|---|

| Truyện Kiều | Clean carved woodblock | Excellent |

| Lục Vân Tiên | Clean print | Excellent |

| Đại Việt Sử Ký Toàn Thư | Faded, bled-through ink, time-warped paper | Noticeably lower |

Best- and worst-case predictions illustrate the gap clearly: on Truyện Kiều, models routinely hit H-mean = 1.0 per-page; on the worst Đại Việt Sử Ký pages, H-mean can drop to 0.0 — entire columns are missed when the ink has faded into the substrate.

Limitations

The single biggest residual error source is not the detector itself — it is the missing image preprocessing pipeline that PP-OCRv5 ships with by default and that we did not wire up:

PP-LCNetfor document-orientation classificationUVDocfor de-warping pages whose paper has buckled or twisted with age

Without these, the detector sees rotated and warped pages as out-of-distribution. Adding the preprocessing chain is the next obvious step for closing the Đại Việt Sử Ký gap.

Warning (Honest assessment)

A 0.952 H-mean is a strong number, but it averages over easy and hard sub-corpora. A production OCR service for cultural-heritage digitization needs a triage layer: clean pages route to the fast detector, degraded pages route through preprocessing first. We did not build this layer — yet.

References

- Dang, H.-Q. et al. NomNaOCR: The First Dataset for Optical Character Recognition on Han-Nom Script. RIVF 2022.

- Dongguk University. The Archive of the Cultural Heritage of Buddhist Records (CWKB). https://kabc.dongguk.edu/

- Cui, C. et al. PaddleOCR 3.0 Technical Report. arXiv:2507.05595, 2025.

- Liao, M. et al. Real-time Scene Text Detection with Differentiable Binarization (DB). AAAI 2020.